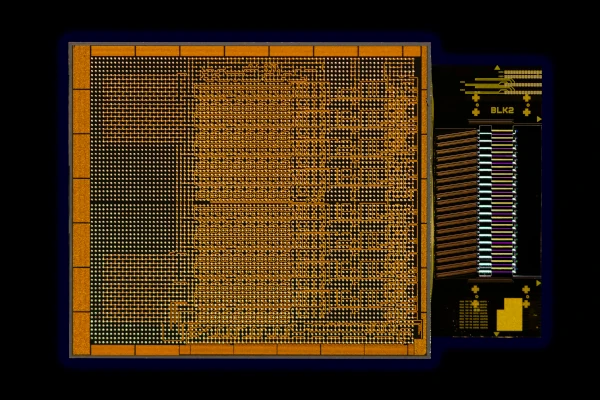

Built for the largest and most demanding AI workloads, the NVIDIA DGX GH200 combines the NVIDIA Grace Hopper Superchip with the NVLink Switch System, uniting up to 256 GPUs in a single system:

To give scientists an advanced platform for these workloads, NVIDIA paired the NVIDIA Grace Hopper Superchip with the NVLink Switch System, uniting up to 256 GPUs in an NVIDIA DGX GH200 system. In the DGX GH200 system, 144 terabytes of memory are accessible to the GPU shared memory programming model at high speed over NVLink.

Compared to a single NVIDIA DGX A100 320 GB system, NVIDIA DGX GH200 provides nearly 500x more memory to the GPU shared memory programming model over NVLink, forming a giant data center-sized GPU. NVIDIA DGX GH200 is the first supercomputer to break the 100-terabyte barrier for memory accessible to GPUs over NVLink.
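The memory figures above can be sanity-checked with a little arithmetic. The sketch below is illustrative only: the per-superchip capacities (96 GB of HBM3 and 480 GB of LPDDR5X) are assumptions taken from NVIDIA's published Grace Hopper specifications rather than from this text, and the function names are hypothetical. It also assumes 1 TB = 1024 GB, which is the convention under which 256 superchips add up to 144 TB.

```python
# Rough check of the DGX GH200 memory claims.
# Assumed per-superchip capacities (not stated in this article):
HBM3_GB_PER_SUPERCHIP = 96       # GPU memory per Grace Hopper Superchip
LPDDR5X_GB_PER_SUPERCHIP = 480   # CPU memory per Grace Hopper Superchip

SUPERCHIPS = 256                 # "up to 256 GPUs" in one DGX GH200
DGX_A100_GPU_MEMORY_GB = 320     # the DGX A100 320 GB baseline


def total_memory_gb(superchips: int = SUPERCHIPS) -> int:
    """Memory reachable over NVLink across all superchips, in GB."""
    return superchips * (HBM3_GB_PER_SUPERCHIP + LPDDR5X_GB_PER_SUPERCHIP)


def memory_ratio(total_gb: float, baseline_gb: float = DGX_A100_GPU_MEMORY_GB) -> float:
    """How many times larger total_gb is than the baseline system."""
    return total_gb / baseline_gb


total_gb = total_memory_gb()
total_tb = total_gb / 1024       # assumes 1 TB = 1024 GB
ratio = memory_ratio(total_gb)

print(f"Total memory: {total_gb} GB = {total_tb:.0f} TB")
print(f"Ratio vs. DGX A100 320 GB: {ratio:.1f}x")
```

Under these assumptions the total comes to 144 TB and the ratio to roughly 460x, which is in line with the "nearly 500x" figure quoted above.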

The NVIDIA DGX GH200 will be available at the end of this year and will help solve many AI and HPC challenges.

Although DGX H100 is the most performance-efficient training solution for many workloads, memory-bound workloads such as a deep learning recommendation model (DLRM) with terabytes of embedding tables, terabyte-scale graph neural network training, or large data analytics see a speedup of 4x to 7x on DGX GH200.

DGX GH200 systems can be combined to scale processing power further:

For scaling beyond 256 GPUs, ConnectX-7 adapters can interconnect multiple DGX GH200 systems to scale into an even larger solution. The power of BlueField-3 DPUs transforms any enterprise computing environment into a secure and accelerated virtual private cloud, enabling organizations to run application workloads in secure, multi-tenant environments.

DGX GH200 offers a full-stack NVIDIA solution. NVIDIA Base Command includes an OS optimized for AI workloads, a cluster manager, and libraries that accelerate compute, storage, and network infrastructure, all optimized for the DGX GH200 system architecture.

DGX GH200 also includes NVIDIA AI Enterprise, which provides a suite of software and frameworks optimized to streamline AI development and deployment.